It is crucial to acknowledge that misinformation is not just an act of fate – it is very much instrumentalised by political and other actors who have something to gain from the destabilisation of democratic systems. Today’s information landscape, filled with filter bubbles and echo chambers (Pariser, 2012), also provides fertile ground for populist actors who thrive in an environment saturated with misinformation. In the face of the upcoming 2024 European elections – which, amongst a wide range of other topics, will also define the future of research in Europe – one must be alert to the threat misinformation poses. The spread of misinformation is often helped along by large numbers of unsuspecting victims who, perhaps for socioeconomic, psychological or personal reasons, fall into a “rabbit hole”. Fighting the sources, perpetrators and profiteers of misinformation, and ensuring that private social media platforms take responsibility, is an ongoing policy challenge. However, engaging with and helping people who have fallen victim to misinformation, or ensuring that science is communicated in a way that keeps the public off this slippery slope, is something that willing scientists and educators can take upon themselves.

Question of viewpoints

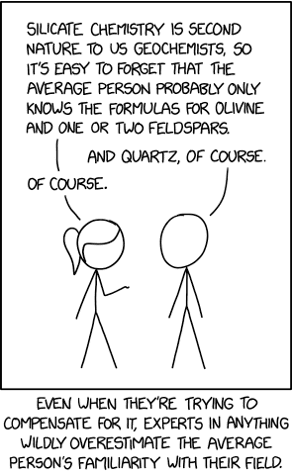

To understand why large crowds of people are more than willing to subscribe to outlandishly false ideas, I invite you to take a step back and attempt to assess the “bubble of science” as a layperson would. Then, following the advice of the comic (Figure 1), take another step back, as your assumption about a layperson’s knowledge may still be more scientific than you think.

By this, I do not mean to imply the “stupidity” of our theoretical layperson, even if they believe in crackpot theories. Rather, I wish to emphasise how scientists, after interacting extensively with colleagues and other experts, may have a skewed perception of how well someone outside these circles understands their subject areas.

Narratives

Think about the time – years of study, research and work experience – it takes to amass all the knowledge you have in your field of expertise. This knowledge gives context to scientific results, but for those who pursued other activities, this context may be missing. Take, for example, the graph a mass spectrometer prints out: to chemists it makes sense, but to the layperson it is just a piece of data, a point with no beginning and no end. What does have a beginning and an end can become a story. Scientific facts may tell their stories to those who understand them, but for our imaginary layperson we have to provide a full story, not just the facts. Humans tend to think in narratives (Hammack, 2011), and sadly, common misinformation tropes excel at them, while actors communicating scientific evidence have room to improve. In fact, narratives may be intentionally eliminated from science by the scientific community in order to uphold an air of professionalism and objectivity (Padian, 2018). Meanwhile, misinformation may use actual scientific facts but place them in a context that tells a misleading story. Or it may simply propagate half-true or false information to create a convincing narrative. To counter the former, one has to make sure that the proper context is given when findings are communicated; with regard to the latter, we have to look into what makes a good story in order to identify potential shortcomings in science communication.

Underdogs

Let’s examine a common trope which, if we have interacted with a victim of misinformation, we may have heard in some variation:

“The [perceived powerful actor, e.g. government/media/WHO] lies to you. We are in opposition and we are being silenced – but we know the truth. That’s why they oppress us.”

This is a fair example of the “underdog” narrative. Ever since the story of David and Goliath, people have tended to sympathise with a protagonist who goes against the current and fights against all odds (an “underdog”). There are many admirable, positive examples of this, such as Slumdog Millionaire or 12 Angry Men. In the case of misinformation, however, the spreaders – either knowingly and cynically, in order to influence others, or unwittingly, as victims themselves – position themselves as the underdog only to garner sympathy and additional followers. To counter this, the communication of science must not be the current the underdog is fighting against, the Goliath to their David. Removing the perception of academic “ivory towers” and the appearance of authority or superiority is an important step in this direction. Often, the voice of science is communicated in an authoritative manner (Lemke, 1990). A softer version of the authoritative voice is the voice of a “nanny”: instead of giving orders, it states, for example, that “it is for your safety” but refuses to elaborate further, which may come across as condescending or infantilising to the victims of misinformation. Actively promoting horizontal engagement, for instance through dialogues in which people can ask questions and receive answers in an accessible way, rather than taking a top-down, commanding stance, may help people not only to accept these concepts but also to see the rationale behind them.

Uncertainty, breach of trust

Finally, it is crucial to communicate uncertainty effectively. This can be especially challenging because, as discussed above, people think in narratives, which often comes with a simplistic worldview. Stories have heroes and villains, truths and lies and, importantly, continuity. However, such binary thinking cannot be afforded in science, or in its communication, as scientific paradigms change over time (Kuhn, 1962). During the coronavirus pandemic, new findings came in rapid succession as scientists learned more and more about the virus, and rules and policies changed just as rapidly in response (Crow et al., 2022), which in certain cases made them not fully coherent. Scientific results and the corresponding guidance change in light of new evidence. However, if a previous finding was positioned and communicated as an indisputable truth, such changes may result in a breach of trust in science. Scientists are, of course, aware of the inherent uncertainties, but if these are not explicitly highlighted when needed, public discourse may arrive at broad statements that equate science with truth and disregard its changing nature. While at first glance emphasising uncertainty may appear counterproductive, as it could erode trust, a 2020 study (van der Bles et al.) showed that when results were communicated in a way that highlighted potential shortcomings, readers not only found them more trustworthy, but the communication also appeared more genuine and less authoritative, thus remedying the issue highlighted in the previous section.

Conclusion

There is no silver bullet in the fight against misinformation. Different actors can, and must, address different aspects of the issue in different ways. While political and private actors can implement policies that counter misinformation, the scientific community also has an opportunity to engage with the matter.

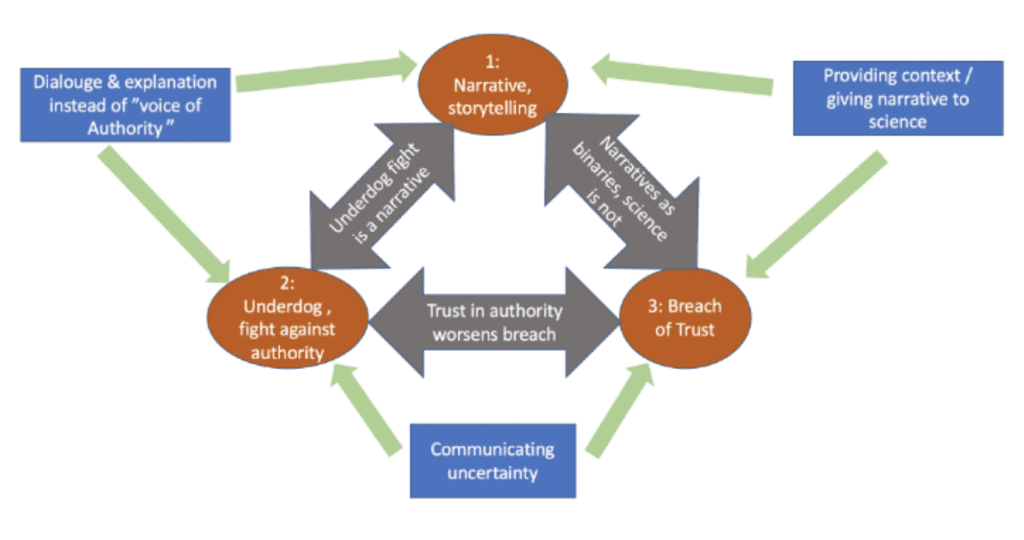

Misinformation spreads successfully due to multiple interconnected reasons, some of which I have highlighted above. These reasons are not distinct – neither are the ways we can counter them.

As summarised in the figure above, misinformation campaigns often offer coherent and accessible narratives, whereas scientific information can lack context. Scientific facts are needed to provide guidance in policy. Yet, if they are left without elaboration, the guidance based on them may be characterised as an “appeal to authority”, against which proponents of misinformation can position themselves as underdogs. Trust in blatant misinformation can be reinforced by a breach of trust in actual science: if uncertainty is not communicated effectively, changes in scientific perspectives may further erode trust in science, thus giving misinformation a larger platform. To counter this, the scientific community must consider the internal logics of misinformation (Polleri, 2022) and build a scientific narrative that responds to them in an inclusive and accessible way. This narrative should also avoid authoritative voices and, where possible, pave the way towards dialogue that acknowledges flaws and uncertainties as well.

Sources:

Crow, D. A., DeLeo, R. A., Albright, E. A., Taylor, K., Birkland, T., Zhang, M., Koebele, E., Jeschke, N., Shanahan, E. A., & Cage, C. (2022). Policy learning and change during crisis: COVID‐19 policy responses across six states. Review of Policy Research, 40(1), 10–35. https://doi.org/10.1111/ropr.12511

Hammack, P. L. (2011). Narrative and the politics of meaning. Narrative Inquiry, 21(2), 311–318. https://doi.org/10.1075/ni.21.2.09ham

Kuhn, T. S. (1962). The structure of scientific revolutions. University of Chicago Press.

Lemke, J. L. (1990). Talking science: Language, learning, and values (p. XI). Ablex.

Padian, K. (2018). Narrative and “Anti-narrative” in Science: How Scientists Tell Stories, and Don’t. Integrative and Comparative Biology, 58(6). https://doi.org/10.1093/icb/icy038

Pariser, E. (2012). The filter bubble: How the new personalized web is changing what we read and how we think. Penguin Books.

Polleri, M. (2022). Towards an anthropology of misinformation. Anthropology Today, 38(5), 17–20. https://doi.org/10.1111/1467-8322.12754

van der Bles, A. M., van der Linden, S., Freeman, A. L. J., & Spiegelhalter, D. J. (2020). The effects of communicating uncertainty on public trust in facts and numbers. Proceedings of the National Academy of Sciences, 117(14), 7672–7683. https://doi.org/10.1073/pnas.1913678117

xkcd comics. (2021). Average Familiarity. Xkcd. https://xkcd.com/2501/